One of the interesting features of Windows Mixed Reality, specifically the HoloLens, is the ability to share a holographic experience across multiple devices. The programming requirements for holographic scene sharing are discussed in detail in the Holograms 240 course. A key component is the World Anchor, which defines a fixed position in the real world using the Windows Mixed Reality APIs. A defined anchor can be shared across multiple HoloLens units. If a unit can match the anchor details against its onboard spatial information, a scene object associated with the anchor is automatically locked to that position.

A common question is how to simplify anchor placement for a user, especially in demo and presentation scenarios: can pre-defined images or markers define the placement point, instead of manual placement with user gestures in a dynamic environment such as a crowded event demo area? Image markers have been around for a while, and recent implementations go beyond simple recognition and embedded string data to identify the marker’s position in 3D world space.

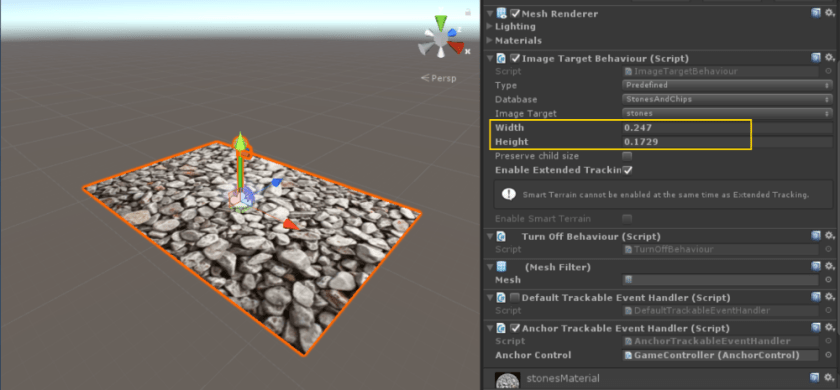

One of the available solutions for marker and 3D object tracking is Vuforia, listed on the Mixed Reality Development tools page and available as an add-on to Unity 2017.2 and later. The Vuforia SDK supports marker tracking using the HoloLens PV (photo/video) camera and returns the marker’s position and orientation relative to the user. It’s a relatively simple approach to defining a real-world position, and the SDK handles all image processing and tracking.

Using Placement Markers

Anchor management is simplified by the MixedRealityToolkit-Unity, and the typical setup in a holographic scene-sharing project makes anchor placement the user’s first action. The process is to raycast the user’s gaze onto a world surface and place a 3D hologram to mark the location with a tap gesture or click. This is the approach in the sample project HoloPlacementAndSharing, where a placement point is defined with an anchor and the anchor is then shared with other devices.

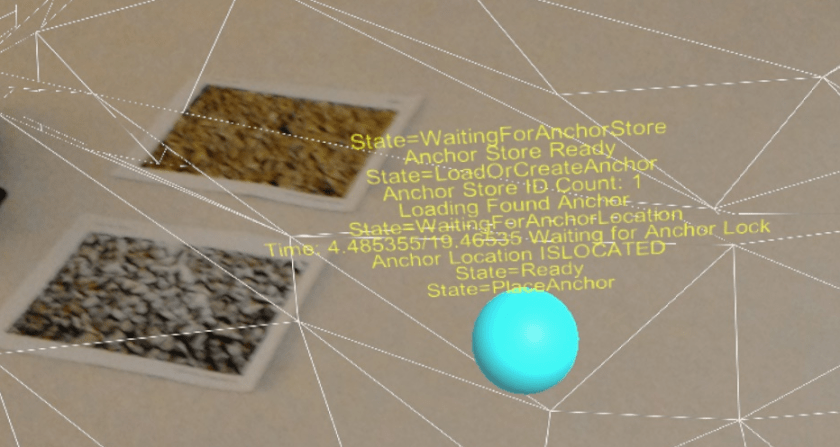

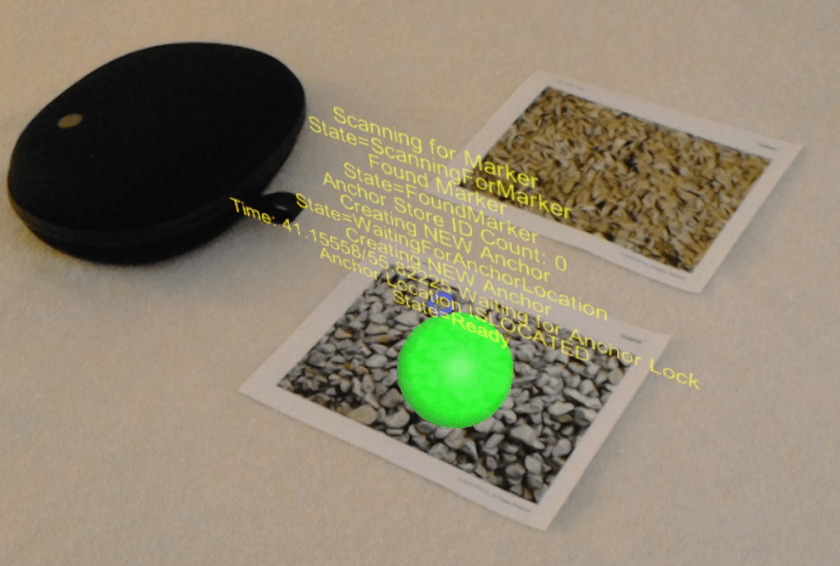

To simplify anchor placement, printed markers or images can define the placement point. An important point is that marker tracking does not need to be continuous, especially if the marker image is in a fixed location: all you need is to determine the image location once, and HoloLens spatial tracking takes over.

There is further flexibility in how to process the marker. It can be the actual placement point, or it can serve as a reference with an offset determined during development, for example when the marker is posted on a wall or on the floor. In the example, the center of the image is used as the anchor point.
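The offset case can be sketched as a small helper. This is only an illustration: `MarkerOffset`, `AnchorPosition`, and `localOffset` are hypothetical names that do not come from the sample projects, and the offset is assumed to be measured in the marker’s own local axes.

```csharp
using UnityEngine;

// Hypothetical helper, not part of the sample projects.
public static class MarkerOffset
{
    // Returns the world-space anchor position for a marker pose plus an offset
    // expressed in the marker's local axes. Rotating the offset by the marker's
    // rotation converts it from marker space into world space.
    public static Vector3 AnchorPosition(
        Vector3 markerPosition, Quaternion markerRotation, Vector3 localOffset)
    {
        return markerPosition + markerRotation * localOffset;
    }
}
```

With a zero offset the result is the marker center, which matches the approach used in the example. Because the offset is rotated with the marker, the same value should work wherever the marker is posted, as long as it is mounted the same way.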

Implementing the Solution

- Set up a shared mixed reality experience with anchor sharing, such as the Holograms-240 project (https://github.com/Microsoft/HolographicAcademy/tree/Holograms-240-SharedHolograms) from the Holographic Academy (https://github.com/Microsoft/HolographicAcademy) or the sample anchor sharing project HoloPlacementAndSharing.

- Provide a scan-for-image-marker option to supplement manual placement, using the detected marker position as the anchor position instead of the raycast to the spatial map. An example implementation is presented in the HoloAnchorsWithMarkers project.

- Vuforia ImageTargets implement ITrackableEventHandler to handle OnTrackingFound() and retrieve the marker position and rotation in world space to use as the anchor location.

- When the anchor image marker is found, the Vuforia component and webcam can be turned off to minimize battery and CPU load; there is no need to track the image continuously, as the HoloLens tracks its own position in world space by default.
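The last step, turning Vuforia off once the marker pose has been captured, might look like the sketch below. It assumes the Vuforia Unity integration exposes the `VuforiaBehaviour.Instance` singleton, so check this against your SDK version. The class name `VuforiaShutdown` and the method `StopMarkerTracking` are hypothetical.

```csharp
using UnityEngine;
using Vuforia;

// Hypothetical component, not part of the sample projects.
public class VuforiaShutdown : MonoBehaviour
{
    // Call once the marker position and rotation have been read.
    public void StopMarkerTracking()
    {
        // Assumption: disabling the VuforiaBehaviour stops tracking and camera use.
        VuforiaBehaviour vuforia = VuforiaBehaviour.Instance;
        if (vuforia != null && vuforia.enabled)
        {
            vuforia.enabled = false;
        }
    }
}
```

Setting `enabled` back to `true` would be the natural way to offer a rescan option if the marker is moved.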

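Allow the user to place the anchor as needed, either manually through gaze targeting or by detecting the image. In both cases, the anchor is loaded from the anchor store when it already exists there and is created otherwise: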

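```csharp
if (foundAnchorInStore)
{
    // Reuse the anchor saved in a previous session.
    DisplayStatus("Loading Found Anchor");
    worldAnchor = anchorStore.Load(AnchorName, anchoredObject);
}
else
{
    // No saved anchor: attach a new one at the object's current pose.
    DisplayStatus("Creating NEW Anchor");
    worldAnchor = anchoredObject.AddComponent<WorldAnchor>();
}
```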
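The snippet above relies on an anchor store and a `foundAnchorInStore` flag that are set up elsewhere. A minimal sketch of that setup, assuming Unity 2017.2+ with the `UnityEngine.XR.WSA.Persistence` namespace, could look like this. The class name `AnchorStoreExample`, the field values, and the `SaveAnchor` method are hypothetical and not taken from the sample projects.

```csharp
using System;
using UnityEngine;
using UnityEngine.XR.WSA;
using UnityEngine.XR.WSA.Persistence;

// Hypothetical component illustrating local anchor persistence.
public class AnchorStoreExample : MonoBehaviour
{
    [SerializeField] private GameObject anchoredObject;
    [SerializeField] private string anchorName = "DemoAnchor"; // assumed id

    private WorldAnchorStore anchorStore;

    private void Start()
    {
        // The store loads asynchronously; the callback receives it when ready.
        WorldAnchorStore.GetAsync(OnStoreLoaded);
    }

    private void OnStoreLoaded(WorldAnchorStore store)
    {
        anchorStore = store;
        bool foundAnchorInStore = Array.IndexOf(store.GetAllIds(), anchorName) >= 0;
        if (foundAnchorInStore)
        {
            // Attaches the saved anchor to the object and restores its pose.
            store.Load(anchorName, anchoredObject);
        }
    }

    // Persist an anchor once the device has located it in the real world.
    public void SaveAnchor(WorldAnchor anchor)
    {
        if (anchorStore == null || anchor == null || !anchor.isLocated)
        {
            return;
        }
        // Save returns false when the id already exists, so remove any old entry first.
        anchorStore.Delete(anchorName);
        anchorStore.Save(anchorName, anchor);
    }
}
```

This covers only persistence on one device. Sharing the anchor with other HoloLens units goes through the sharing setup described in the Holograms 240 course.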

The Vuforia SDK’s default handling on target image detection is to display the child GameObjects and enable their Colliders. The script AnchorTrackableEventHandler.cs (based on DefaultTrackableEventHandler.cs from the Vuforia SDK) implements ITrackableEventHandler with a minor modification: it notifies the anchor management script that the image was found and sends its position and rotation in real-world space.

```csharp
private void OnTrackingFound()
{
    Renderer[] rendererComponents = GetComponentsInChildren<Renderer>(true);
    Collider[] colliderComponents = GetComponentsInChildren<Collider>(true);

    // Enable rendering:
    foreach (Renderer component in rendererComponents)
    {
        component.enabled = true;
    }

    // Enable colliders:
    foreach (Collider component in colliderComponents)
    {
        component.enabled = true;
    }

    Debug.Log("Trackable " + mTrackableBehaviour.TrackableName + " found");

    // Notify the anchor management script of the marker's world-space pose:
    if (anchorControl)
    {
        anchorControl.FoundMarker(transform.position, transform.rotation);
    }
}
```
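On the receiving side, the anchor management script has to move the anchored object to the reported pose and lock it there. The following is a sketch of what a `FoundMarker` receiver could do, not the implementation from the repository. The class name `MarkerAnchorReceiver` and its fields are hypothetical, and Unity 2017.2+ is assumed for the `UnityEngine.XR.WSA` namespace.

```csharp
using UnityEngine;
using UnityEngine.XR.WSA;

// Hypothetical receiver for the marker pose, not the repository's script.
public class MarkerAnchorReceiver : MonoBehaviour
{
    [SerializeField] private GameObject anchoredObject;

    private bool markerPlaced;

    public void FoundMarker(Vector3 position, Quaternion rotation)
    {
        // The marker only needs to be located once.
        if (markerPlaced || anchoredObject == null)
        {
            return;
        }

        // An object with a WorldAnchor cannot be moved, so remove any existing anchor first.
        WorldAnchor existing = anchoredObject.GetComponent<WorldAnchor>();
        if (existing != null)
        {
            DestroyImmediate(existing);
        }

        // Move the object to the marker pose, then lock it with a new anchor.
        anchoredObject.transform.SetPositionAndRotation(position, rotation);
        anchoredObject.AddComponent<WorldAnchor>();
        markerPlaced = true;
    }
}
```

The `markerPlaced` guard matters because the tracking-found handler can fire again whenever the marker re-enters the camera view.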

A complete Unity project is available in the HoloAnchorsWithMarkers repository, detailing anchor management and marker detection event handling. Note that it requires a separate installation of the Vuforia SDK and a developer app key from Vuforia. Sign up with Vuforia for access to the SDK and create a key at the Vuforia Developer Portal (https://developer.vuforia.com).

Other Applications

We presented the general idea of supplementing World Anchor placement with Vuforia markers and provided projects on GitHub as a simplified guide to anchor management, anchor sharing, and the use of additional technologies to detect and track real-world items.

Beyond image recognition, the Vuforia SDK also supports 3D object detection and embedding string data in image markers, which can further enhance the Mixed Reality experience.

Resources

- HoloPlacementAndSharing – Anchor Placement and Sharing

- HoloAnchorsWithMarkers – Reference Code using Vuforia SDK

- https://github.com/Microsoft/HolographicAcademy

- https://github.com/Microsoft/HolographicAcademy/tree/Holograms-240-SharedHolograms

- Mixed Reality Toolkit-Unity

- Vuforia Developer Portal – Vuforia SDK and Developer Documentation

For questions, comments or contact – follow/message me on Twitter @rlozada
